DNA Melting: Model function and parameter estimation by nonlinear regression

Overview

In addition to the double stranded DNA concentration Cds(t), ΔH°, and ΔS°, the measured fluorescence voltage, Vf,measured(t), depends on several factors, including:

- dynamics of the temperature cycling system

- thermal quenching of the fluorophore

- photobleaching

- responsivity and offset of the instrument

- binding kinetics of the dye

The goal is to write a model for Vf,measured that takes these effects into account and use nonlinear regression to estimate the parameters. The model proposed here adheres to Dr. George E. P. Box's excellent advice on modeling, in that it is both wrong and useful. Some of the assumptions are more dubious than others.

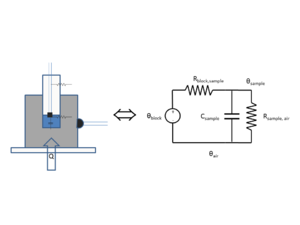

Sample temperature

The DNA melter measures the temperature of the metal heating block, not of the sample. Because the glass vial has high thermal resistance, the sample temperature significantly lags the heating block temperature. A simple, lumped-element circuit model can predict the sample temperature to within about 0.5°C based on the block temperature history.

The state variables in a thermal circuit model are temperature T and heat flow q. A fundamental assumption of lumped element models is that each element has a single value of both state variables. Because of this assumption, lumped element models only work well if the bodies you are modeling have a nearly uniform temperature. Components that are small in size, have high thermal conductivity, and have low heat transfer coefficients are most suitable for lumped element modeling. These attributes are summarized by the Biot number, Bi = hl/k, where h is the heat transfer coefficient, l is the characteristic length of the body (volume divided by surface area), and k is the thermal conductivity of the material. Bodies with Biot numbers much less than 1 (a common rule of thumb is Bi < 0.1) are good candidates for lumped element models. The Biot number for the heating block is on the order of 6×10⁻³, assuming h = 50 W/(m²·K), l = 3 cm, and k = 235 W/(m·K). This is much less than one, so the heating block is a good candidate for a lumped element model. The Biot number for the glass vial is about 0.03 (h = 10.5 W/(m²·K), l = 3 mm, and k = 1.05 W/(m·K)), which also satisfies the criterion.
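The two Biot numbers quoted above can be checked with a few lines of MATLAB. This is only an arithmetic sketch; the helper name `biot` is made up for illustration, and the values of h, l, and k are the ones assumed in the text.

```matlab
% Biot number Bi = h*l/k, with all quantities in SI units
biot = @(h, l, k) h .* l ./ k;          % hypothetical helper, not part of the lab code

BiBlock = biot(50,   0.03,  235);       % heating block: h in W/(m^2 K), l in m, k in W/(m K)
BiVial  = biot(10.5, 0.003, 1.05);      % glass vial

fprintf('Bi(block) = %.1e, Bi(vial) = %.2f\n', BiBlock, BiVial);
```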

The DNA melter measures the heating block's temperature directly. The heating block has a much higher thermal capacity than the sample, so a temperature source is a good model for the block.

The model neglects the heat capacity of the vial.

The thermal circuit model for the heating system is shown in the figure on the right. The circuit is a first-order, low-pass filter. In this circuit, a step increase in block temperature causes the sample temperature to rise exponentially, with time constant τthermal, toward a final change equal to Kthermal times the size of the step.

The transfer function of the low-pass filter has two parameters:

- $ \frac{\hat{T}_{sample}(\omega)} {\hat{T}_{block}(\omega)}=\frac{K_{thermal}}{j \omega \tau_{thermal} + 1} $.

- Kthermal is the low frequency gain of the system.

- τthermal is the time constant.

Implementation

MATLAB includes several functions for simulating continuous-time, linear, shift-invariant (CTLSI) systems. You can write the transfer function of a CTLSI system as the ratio of two polynomials in $ s $, where $ s=j\omega $. For example, the transfer function of a low-pass filter can be expressed in the form $ H(\omega)=\frac{1}{\tau s+1}=\frac{1}{\tau j \omega+1} $. The MATLAB function tf constructs a software object that represents a CTLSI transfer function. tf takes two arguments that specify the coefficients of the numerator and denominator polynomials. The arguments must be formatted as vectors containing the coefficients of $ s $ in order of decreasing power. For example, the transfer function $ \frac{1}{60 s + 1} $ can be created by:

>> exampleTransferFunction = tf( 1, [ 60 1 ] )

exampleTransferFunction =

1

--------

60 s + 1

Continuous-time transfer function.

Several functions operate on continuous-time transfer function objects. For example, bode and impulse generate Bode and impulse response plots, as expected. (Perhaps the Vice President of Inconsistent Naming at MathWorks was out sick the day those functions were named.) There is also a CTLSI simulator called lsim that will be useful for deriving the sample temperature from the block temperature using a lumped-element model of the thermal low-pass system realized by the heating block, glass vial, and sample. (Seems like the Director of Inconsistencies was back in the office when lsim got named.)

The SimulateLowPass MATLAB function below uses lsim to produce the simulated output of a low-pass filter given its time constant, a vector of input values, and an optional vector of time values. If supplied, the time vector must be the same length as the input vector (i.e. each time value corresponds to one input value). If no time vector is passed, the function generates a time vector assuming that the input values are sampled uniformly at ten times per second, starting at $ t=0 $. The DNA melter software produces ten samples per second.

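function SimulatedOutput = SimulateLowPass( TimeConstant, InputData, TimeVector )

% Default time vector: uniform sampling at 10 samples per second, starting at t = 0

if( nargin < 3 )

TimeVector = (0:(length(InputData)-1))/10;

end

% First-order low-pass filter, 1 / (TimeConstant * s + 1)

transferFunction = tf( 1, [TimeConstant, 1] );

% Shift the input so the simulation starts from zero, then shift the output back

initialTemperature = InputData(1);

InputData = InputData - initialTemperature;

SimulatedOutput = lsim( transferFunction, InputData, TimeVector' ) + initialTemperature;

end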

The function subtracts off the initial value before running the simulation and then adds the initial value back in after the simulation. This is a cheesy way to handle initial conditions: setting the temperature zero point to be equal to the initial temperature. This is equivalent to assuming that the block has been at its initial temperature during the time interval $ t = [ -\infty, 0 ) $.
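One way to sanity-check this approach is to feed the filter a step in block temperature and compare the result with the analytic first-order step response. The script below is a sketch under assumed values (a 60 s time constant and a 10 °C step at t = 10 s, neither taken from measurements). It calls tf and lsim directly, so it runs without the SimulateLowPass file.

```matlab
% Step test of the first-order thermal model (illustrative values only)
tau = 60;                                 % assumed time constant, s
Ts  = 0.1;                                % sample period, s
t   = (0:Ts:300)';                        % time vector, s
stepTime  = 10;                           % block temperature steps up at t = 10 s
blockTemp = 25 + 10 * (t >= stepTime);    % 25 deg C, then 35 deg C

% Same idea as SimulateLowPass: remove the initial value, filter, restore it
T0 = blockTemp(1);
sampleTemp = lsim(tf(1, [tau 1]), blockTemp - T0, t) + T0;

% Analytic step response of 1/(tau*s + 1) for comparison
analytic = T0 + 10 * (1 - exp(-(t - stepTime) / tau)) .* (t >= stepTime);

maxError = max(abs(sampleTemp - analytic));
fprintf('Largest difference from the analytic response: %.4f deg C\n', maxError);
```

Any remaining difference comes from how lsim interpolates the sampled input between time points.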

Photobleaching

Excited dye molecules can react chemically with compounds in their environment and permanently lose their ability to fluoresce — a phenomenon called photobleaching. LED and ambient light illuminate the LC Green dye for 15 or more minutes over the course of a single melting and annealing cycle, which results in measurable photobleaching. The effect of photobleaching can be approximated by a mathematical model.

Assumptions

- The dye is divided into two populations, bleached and unbleached, with concentrations $ \bar{S} $ and $ S $.

- The initial dye concentration is $ S + \bar{S} $.

- Only dye molecules bound to dsDNA may be excited.

- Only excited fluorophore molecules bleach.

- An excited fluorophore will either bleach with probability p or return to the ground state with probability 1-p.

- The constant 1-p encompasses all of the mechanisms by which the fluorophore returns to the ground state unbleached, including fluorescence, phosphorescence, and non-radiative relaxation.

- Bleaching is irreversible.

- The number of molecules in the excited state is proportional to fluorescence.

- Bleached and unbleached molecules bind to dsDNA with the same affinity.

Model

Under these assumptions, the bleaching rate is proportional to fluorescence.

- $ \frac{\partial \bar{S}}{\partial t} = K_{bleaching} f(t) $

Kbleaching encompasses several constants, including $ p $, illumination intensity, optical gain of the instrument, and lock-in amplifier gain.

Setting the initial dye concentration to 1, the fraction of unbleached LC Green can be calculated by integrating:

- $ S(t)=1-\bar{S}(t)=1-K_{bleaching}\int_0^t f(t) \mathrm{d}t $

Implementation

A simple way to approximate an integral in discrete time is a cumulative sum scaled by the sample period: $ \int_0^t f(t) \mathrm{d}t \approx T_s \sum_{n=1}^{t F_s}f(n T_s) $, where $ F_s $ is the sample rate and $ T_s = 1/F_s $ is the sample period. Because $ T_s $ is a constant, it can be absorbed into $ K_{bleaching} $. This works particularly well when the signal changes slowly relative to the sample period. It's a good bet that the bleaching time constant is much longer than the 0.1 s sample period. MATLAB has a built-in function for computing cumulative sums called cumsum. Here is an example:

>> cumsum( 1:10 )

ans =

1 3 6 10 15 21 28 36 45 55

Apart from the constant factor of the sample period, cumsum will generate a very good numerical approximation to $ \int_0^t f(t) \mathrm{d}t $.
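The bleaching model can be sketched with cumsum as follows. The fluorescence trace f, the value of Kbleaching, and the 900 s duration are stand-ins chosen for illustration, not measured data. The running sum is multiplied by the sample period so that it can be compared directly with the exact integral of the stand-in signal.

```matlab
Ts = 0.1;                          % sample period, s (ten samples per second)
t  = (0:Ts:900)';                  % 15 minutes of samples
f  = exp(-t / 300);                % stand-in normalized fluorescence (assumption)
Kbleaching = 2e-4;                 % illustrative bleaching constant, 1/s (assumption)

% Running integral of f, approximated by a scaled cumulative sum
runningIntegral = Ts * cumsum(f);

% Exact integral of the stand-in signal, for comparison
exactIntegral = 300 * (1 - exp(-t / 300));
maxIntegralError = max(abs(runningIntegral - exactIntegral));

% Fraction of unbleached dye, S(t) = 1 - Kbleaching * integral of f
S = 1 - Kbleaching * runningIntegral;

fprintf('Largest integration error: %.3f (the integral reaches %.1f)\n', ...
    maxIntegralError, exactIntegral(end));
```

The error is on the order of one sample period times the signal amplitude, which is small compared with the integral itself.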

Thermal quenching

LC Green I fluoresces less efficiently at higher temperatures. The decrease in fluorescence is approximately linear. Defining the dye efficiency at the initial temperature to be 1, quenching can be modeled by:

- $ \left . Q(t)=1-K_{quench}[T_{sample}(t)-T_{sample}(0)]\right . $

Instrument gain and offset

There are several optical and electronic gain factors built into the DNA melter that determine the instrument responsivity, ∂Vf,measured / ∂ Cds. In addition, Vf,measured may be offset from zero when Cds is zero.

- Kgain is equal to the change in Vf,measured divided by the change in fraction of dsDNA. This is assumed to be constant.

- Koffset is equal to the value of Vf,measured when the dsDNA concentration is zero.

Expression for model Vf,model

- $ \left . V_{f,model}(t) = K_{gain} S(t) Q(t) C_{ds}(T_{sample}(t), \Delta H^\circ, \Delta S^\circ) + K_{offset}\right . $

Cds is the model melting curve produced by the DnaFraction function from part 1 of the lab. Differences between the model and the observations are precisely visualized on a residual plot, described below.
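The pieces of the model can be assembled roughly as shown below. This is a sketch, not the lab's reference implementation. All parameter values are illustrative, the logistic meltCurve is a hypothetical stand-in for DnaFraction, the thermal gain is taken to be 1, and the bleaching term is integrated step by step using f = S·Q·Cds as the normalized fluorescence (an assumption; the scaling of f is folded into Kbleaching).

```matlab
% Synthetic block temperature: linear ramp from 25 to 95 deg C over 600 s
Ts = 0.1;                                   % sample period, s
t  = (0:Ts:600)';                           % time vector, s
blockTemp = 25 + (95 - 25) * t / t(end);

% Illustrative parameter values (assumptions, not fitted results)
Kgain = 2;  Koffset = 0.1;  Kbleaching = 1e-4;  Kquench = 5e-3;  tauThermal = 60;

% Sample temperature: block temperature passed through the thermal low-pass filter
T0 = blockTemp(1);
Tsample = lsim(tf(1, [tauThermal 1]), blockTemp - T0, t) + T0;

% Stand-in melting curve (hypothetical logistic; swap in DnaFraction from part 1)
meltCurve = @(T) 1 ./ (1 + exp((T - 70) / 3));
Cds = meltCurve(Tsample);

% Thermal quenching, Q(t) = 1 - Kquench * [Tsample(t) - Tsample(0)]
Q = 1 - Kquench * (Tsample - Tsample(1));

% Photobleaching: each step removes Kbleaching * Ts * f from the unbleached fraction
S = ones(size(t));
for n = 1:numel(t) - 1
    f = S(n) * Q(n) * Cds(n);               % normalized fluorescence (assumption)
    S(n + 1) = S(n) - Kbleaching * Ts * f;
end

% Model output voltage
VfModel = Kgain * S .* Q .* Cds + Koffset;
```

When fitting real data, another option is to compute S from a cumulative sum of the measured fluorescence rather than from the model's own output.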

Finding double stranded DNA fraction from raw data

The inverse function of the melting model with respect to Vf,measured(t) is helpful to visualize discrepancies between the model and experimental data caused by random noise in Vf,measured and systematic error in the model Vf,model. The function,

- $ C_{ds,inverse-model}(V_{f,measured}(t)) = \frac{V_{f,measured}(t) - K_{offset}} {K_{gain} S(t) Q(t)} $,

is itself a model. This model estimates the concentration of double stranded DNA based on the observations $ V_{f,measured}(t) $ and the models for bleaching and quenching.
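As a quick illustration, the inverse model is a single element-wise expression in MATLAB. The vectors below are synthetic stand-ins (assumptions), built so that the round trip can be checked. With real data, VfMeasured comes from the instrument, and S and Q come from the bleaching and quenching models.

```matlab
t = (0:0.1:60)';                              % time vector, s
CdsTrue = 1 ./ (1 + exp((t - 30) / 5));       % hypothetical dsDNA fraction versus time
S = 1 - 1e-3 * t;                             % stand-in bleaching factor
Q = 1 - 2e-3 * t;                             % stand-in quenching factor
Kgain = 2;  Koffset = 0.1;                    % illustrative instrument parameters

% Noiseless synthetic "measurement" generated from the forward model
VfMeasured = Kgain * S .* Q .* CdsTrue + Koffset;

% Inverse model: undo offset, gain, bleaching, and quenching
CdsEstimate = (VfMeasured - Koffset) ./ (Kgain * S .* Q);

fprintf('Largest round-trip error: %.2e\n', max(abs(CdsEstimate - CdsTrue)));
```

Note that noise in VfMeasured is amplified wherever the product Kgain·S·Q is small.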

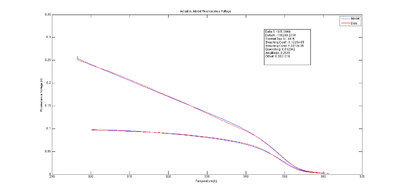

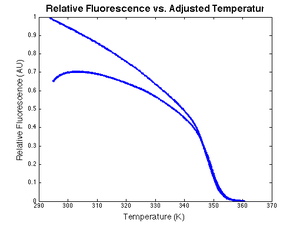

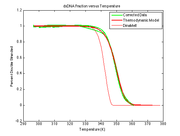

The estimated melting curve may be directly compared with simulations, measurements or other predictions of the true melting curve. The plot at right shows an example of Cds,inverse-model(t) versus Tsample(t). The estimated melting curve is shifted to the right compared to the simulated melting curve, possibly due to systematic error in the sample temperature model. The estimated melting curve also serves as a comparison to the thermodynamic model developed in DNA Melting Thermodynamics, or to any other independent measurement or model of the melting curve, i.e., the concentration of dsDNA vs sample temperature.

Residual plot

Observed values differ from predicted values because of noise and systematic errors in the model. Residuals are the difference between experimental observations and model predictions, Vf,model−Vf,measured. Ideally, the residuals should be random and identically distributed.

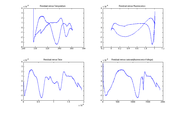

The plots at right show Vf,model−Vf,measured, versus temperature, time, fluorescence, and the cumulative sum of fluorescence. The residuals are clearly not random and identically distributed. This suggests that the model does not perfectly explain the observations. The scale of the plot is much smaller than the data plot — about one percent of the data scale.

A perfect model might require dozens of added parameters and additional physical measurements.

Plotting the residuals versus different variables can help suggest what factors are not modeled well.

Testing the fitting algorithm

The inputs to the model function, Vf,model, are the block temperature (from which sample temperature is derived) and the model parameters (to be determined). The model function is suitable for use with the MATLAB nlinfit function (or other fitting routines). The inputs to nlinfit will then be the block temperature, the fluorescence signal, the model function, and initial values of the model parameters. The output of nlinfit is the set of fitted parameter values.

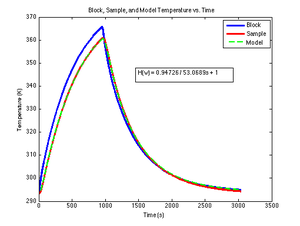

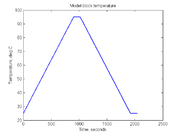

In Part II, the block temperature will be controlled to produce a ramp up from room temperature to 95 degrees then back down to room temperature.

% generate temperature ramp

Tmin = 25;

Tmax = 95;

Temp = [ (0:0.1:900)*((Tmax-Tmin)/900) + Tmin, Tmax*ones(1,1200), ...
(0.1:0.1:900)*((Tmin-Tmax)/900) + Tmax, Tmin*ones(1,1200) ];

time = (0:length(Temp)-1)*0.1;

plot(time,Temp)

xlabel('Time, seconds')

ylabel('Temperature, deg C')

title('Model block temperature')

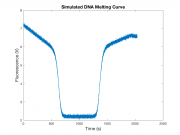

To test your curve fitting code, create a synthetic data set with some added random noise:

dnaConcentration = 30E-6;

deltaS = -500;

deltaH = -180E3;

noise = 0.05; % Roughly 5% noise

F = DnaFraction( dnaConcentration, Temp + 273, deltaS, deltaH );

F = F + noise*randn(size(F));

plot(time,F)

xlabel('Time, seconds');

ylabel('Fluorescence Signal');

title('Simulated DNA Melting Curve');

Even at a relatively large amplitude, random noise by itself should not appreciably affect the fitted parameter values; however, noise will generally contribute to uncertainty in the fit values. This uncertainty is seen in the parameter confidence intervals. Use the MATLAB function nlparci to obtain the 95% confidence intervals from the results returned by nlinfit, e.g.,

[fitValues, residual, ~, COVB, ~] = nlinfit(Temp, F, fitFunc, beta0, fitOptions);

CI = nlparci(fitValues, residual, 'covar',COVB);

where CI will be an N×2 array and N is the length of fitValues. Each row holds the lower and upper confidence bounds for one parameter.
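The calling pattern can be tried on a small, self-contained problem before the full melting model is wired in. The sketch below fits a hypothetical two-parameter logistic curve (a stand-in, not the DnaFraction model) to noisy synthetic data. The names modelFcn, betaTrue, and beta0 are illustrative.

```matlab
rng(0);                                        % make the noise reproducible
Temp = linspace(25, 95, 200)';                 % temperature, deg C

% Hypothetical stand-in model: beta(1) = midpoint temperature, beta(2) = width
modelFcn = @(beta, T) 1 ./ (1 + exp((T - beta(1)) / beta(2)));
betaTrue = [70; 3];

% Synthetic data with roughly 5% noise
F = modelFcn(betaTrue, Temp) + 0.05 * randn(size(Temp));

% Fit from a deliberately imperfect starting guess
beta0 = [60; 5];
[fitValues, residual, ~, COVB] = nlinfit(Temp, F, modelFcn, beta0);

% 95% confidence intervals: one row per parameter, [lower upper]
CI = nlparci(fitValues, residual, 'covar', COVB);

disp([fitValues(:), CI]);                      % fitted value, lower bound, upper bound
```

If the known parameter values fall well outside the reported intervals for synthetic data like this, suspect the fitting code rather than the noise.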

Comparing the known and unknown samples

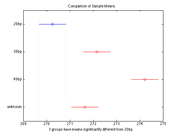

One possible way to compare the unknown sample to the three knowns is to use MATLAB's anova1 and multcompare functions. ANOVA avoids the inflated false-positive rate that arises when multiple sample means are compared using repeated Student's t-tests.

The following code creates a simulated set of melting temperatures for three known samples and one unknown. In the simulation, each sample was run three times. The samples have melting temperatures of 270, 272, and 274 degrees. The unknown sample has a melting temperature of 272 degrees. Random noise is added to the true mean values to generate simulated results. Try running the code with different values of noiseStandardDeviation.

% create simulated dataset

noiseStandardDeviation = 0.5;

meltingTemperature = [270 270 270 272 272 272 274 274 274 272 272 272] ...
+ noiseStandardDeviation * randn(1,12);

sampleName = {'20bp' '20bp' '20bp' '30bp' '30bp' '30bp' '40bp' '40bp' '40bp' ...
'unknown' 'unknown' 'unknown'};

% compute anova statistics

[p, anovaTable, anovaStatistics] = anova1(meltingTemperature, sampleName);

% do the multiple comparison

[comparison means plotHandle groupNames] = multcompare(anovaStatistics);

The multcompare function generates a table of confidence intervals for each possible pair-wise comparison. You can use the table to determine whether the means of two samples are significantly different. Output of multcompare is shown at right. If your data has a lot of variation, you might have to use the options to reduce the confidence level. (Or there might not be a significant difference at all.) Note that multcompare has a default confidence level of 95% (alpha = 0.05). One way to assess how confident you are in your sample identification is by finding the lowest "alpha" value needed to identify your sample.
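One way to look for the lowest workable alpha is to rerun multcompare over a range of values and count how many known samples remain statistically indistinguishable from the unknown. The sketch below reuses the simulated data from above. The list of alpha values is arbitrary, and treating "confidence interval contains zero" as "not distinguishable" is the assumption being illustrated.

```matlab
rng(1);                                        % make the simulated data reproducible
noiseStandardDeviation = 0.5;
meltingTemperature = [270 270 270 272 272 272 274 274 274 272 272 272] ...
    + noiseStandardDeviation * randn(1, 12);
sampleName = {'20bp' '20bp' '20bp' '30bp' '30bp' '30bp' '40bp' '40bp' '40bp' ...
    'unknown' 'unknown' 'unknown'};

% One-way ANOVA with the figure and table display suppressed
[~, ~, anovaStatistics] = anova1(meltingTemperature, sampleName, 'off');
unknownIndex = find(strcmp(anovaStatistics.gnames, 'unknown'));

for alpha = [0.001 0.01 0.05 0.1 0.2]
    comparison = multcompare(anovaStatistics, 'Alpha', alpha, 'Display', 'off');

    % Keep only the pairwise comparisons that involve the unknown sample
    involvesUnknown = comparison(:, 1) == unknownIndex | comparison(:, 2) == unknownIndex;
    rows = comparison(involvesUnknown, :);

    % Columns 3 and 5 hold the lower and upper bounds of the difference in means
    excludesZero = rows(:, 3) > 0 | rows(:, 5) < 0;

    fprintf('alpha = %.3f: %d known sample(s) not distinguishable from the unknown\n', ...
        alpha, sum(~excludesZero));
end
```

The identification is unambiguous at a given alpha when exactly one known sample remains indistinguishable from the unknown.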

Consider devising a more sophisticated method that uses both the ΔH° and ΔS° values, instead.
