Assignment 2 Part 1: Noise in images

Overview

Acquiring a digital image is essentially an exercise in measuring the intensity of light at numerous points on a grid. Light intensity measurements are subject to noise sources that limit the precision of images. In other words, there is a difference between what you measure and the actual value of the physical quantity you were trying to measure. Mathematically, this can be stated as: $ M=Q+\epsilon $, where $ M $ is the measured value, $ Q $ is the true (unknowable) value of the quantity (light intensity in this case), and $ \epsilon $ is the measurement error. In this part of the assignment, you will develop a software model for the noise sources in a digital image.
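The relationship $ M=Q+\epsilon $ can be illustrated with a minimal sketch. The function name `measure`, the choice of zero-mean Gaussian error, and the numerical values below are assumptions made purely for illustration; the actual noise sources in an image are the subject of the rest of this assignment.

```python
import random
import statistics

def measure(true_value, noise_sd, rng):
    """Return one simulated measurement M = Q + epsilon.

    Assumption for illustration only: epsilon is drawn from a
    zero-mean Gaussian with standard deviation noise_sd.
    """
    epsilon = rng.gauss(0.0, noise_sd)
    return true_value + epsilon

rng = random.Random(0)   # fixed seed so the run is repeatable
Q = 100.0                # hypothetical "true" intensity, arbitrary units
NOISE_SD = 5.0           # hypothetical error standard deviation

measurements = [measure(Q, NOISE_SD, rng) for _ in range(10_000)]
errors = [m - Q for m in measurements]   # epsilon = M - Q

mean_error = statistics.fmean(errors)
sd_error = statistics.pstdev(errors)
print(f"mean error: {mean_error:.3f}, error std dev: {sd_error:.3f}")
```

In a simulation, $ Q $ is known, so $ \epsilon $ can be computed directly. In a real measurement only $ M $ is available, which is why a noise model is needed.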

In the classical model of electromagnetism, light intensity is a continuous quantity that could in theory be measured with arbitrary precision. It turns out that the quantum nature of light has fundamental implications for how precisely intensity can be measured.

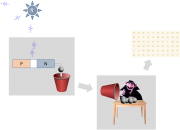

The figure on the right depicts a (very) simplified model of digital image acquisition. In the diagram, a luminous source stochastically emits photons at an average rate of $ \bar{N} $ per second. A fraction $ F_O $ of the emitted photons lands on a semiconductor detector. Incident photons cause little balls (electrons) to fall out of the detector. The balls fall into a red bucket. At regular intervals, the bucket gets dumped out onto a table where the friendly muppet vampire Count von Count counts them. The process is repeated for each point on a grid.
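The bucket model can be sketched in a few lines of Python. This is a rough simulation under stated assumptions, not the model you will build in the assignment. It assumes that photon emission is a Poisson process (exponentially distributed waiting times between photons), that each photon independently lands on the detector with probability $ F_O $, and that every incident photon releases exactly one electron. The function name `count_electrons` and all numerical values are hypothetical.

```python
import random
import statistics

def count_electrons(n_bar, f_o, exposure_s, rng):
    """Simulate one bucket: electrons collected at one grid point in one interval.

    Assumptions (not from the assignment text): emission is a Poisson
    process with mean rate n_bar photons per second, and each incident
    photon knocks exactly one electron into the bucket.
    """
    count = 0
    t = rng.expovariate(n_bar)          # emission time of the first photon
    while t < exposure_s:
        if rng.random() < f_o:          # photon happens to land on the detector
            count += 1                  # one electron falls into the bucket
        t += rng.expovariate(n_bar)     # waiting time until the next photon
    return count

rng = random.Random(1)   # fixed seed so the run is repeatable
N_BAR = 2000.0           # hypothetical mean emission rate, photons per second
F_O = 0.5                # hypothetical fraction of photons reaching the detector
EXPOSURE_S = 0.05        # hypothetical time between bucket dumps, seconds

# Repeat the measurement many times, as if counting many buckets.
counts = [count_electrons(N_BAR, F_O, EXPOSURE_S, rng) for _ in range(2000)]

expected_mean = N_BAR * F_O * EXPOSURE_S
mean_count = statistics.fmean(counts)
var_count = statistics.pvariance(counts)
print(f"expected mean: {expected_mean:.1f}")
print(f"simulated mean: {mean_count:.2f}, simulated variance: {var_count:.2f}")
```

Under these assumptions the count in each bucket varies from one interval to the next even though the source is perfectly steady on average, and the variance of the counts comes out close to their mean, as expected for Poisson-distributed counts.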